- 2026-May-08

The Roadmap to Crafting an Efficient Face Detection System in the Digital Age

Written by Purushothama Reddy Bandla Jan 2, 2024 9:52:35 AM

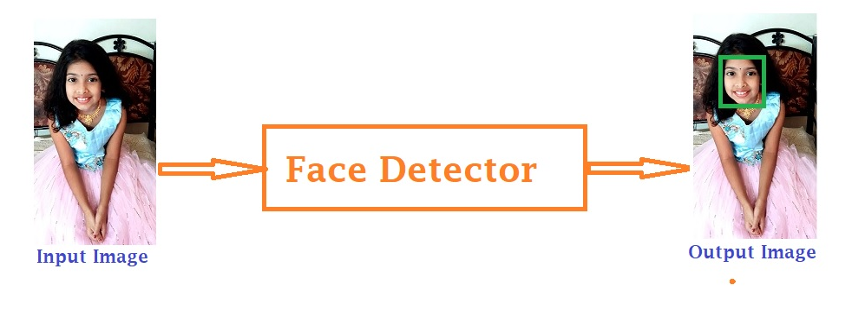

In an era defined by digital connectivity, face detection technology has become part of a wide range of applications, from surveillance and biometrics to photography, security systems, and augmented reality filters on social media platforms. The precision and speed with which systems identify faces in images or videos have become pivotal across industries. This article outlines what goes into building an efficient face detection system.

Decoding Face Detection

Face detection is a core computer vision task in which a program identifies human faces and pinpoints their locations within images or video streams. A proficient face detector is the cornerstone for an array of face-related applications, including facial landmark detection, gender classification, face tracking, and face recognition. To serve them, a detector must be robust to variations in pose, illumination, viewpoint, expression, scale, and skin color, as well as to occlusions, disguises, makeup, and more. Building an efficient face detection system involves a series of steps and several key components.

Essential Components of a Robust Face Detection System

Data Collection: The journey commences with the meticulous assembly of high-quality datasets, embodying diverse facial expressions, angles, lighting conditions, and demographics. This diversity proves instrumental in training algorithms effectively.

Algorithm Selection: Choosing a suitable face detection algorithm is critical, because it determines both how accurately and how quickly faces are found. Options span classical techniques such as:

- Haar Cascades (2001): Introduced by Viola and Jones in 2001, Haar Cascades are a classical approach to face detection. The method chains simple classifiers into a cascade, which makes it fast, but it may struggle to detect faces under certain conditions.

- DLib-HOG (2005): Dlib's detector relies on Histogram of Oriented Gradients (HOG) features, a descriptor introduced in 2005. While robust, it has limitations in certain scenarios, where it may miss faces or add latency.
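To make the "cascade of simple classifiers" idea more concrete, here is a minimal sketch of the building block behind Haar-style detectors: an integral image (summed-area table) and a two-rectangle feature computed from it. It is illustrative only and not taken from any particular library. The function names and the tiny 4x4 test image are assumptions made for this example, and a real cascade combines many such features with learned thresholds.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of pixel intensities


def integral_image(img: Image) -> List[List[int]]:
    """Build a summed-area table padded with one leading row and column of zeros."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Sum of the w-by-h rectangle with top-left corner (x, y), using four lookups."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def two_rect_vertical_feature(ii: List[List[int]], x: int, y: int, w: int, h: int) -> int:
    """Haar-like edge feature: sum of the bottom half minus sum of the top half."""
    half = h // 2
    top = rect_sum(ii, x, y, w, half)
    bottom = rect_sum(ii, x, y + half, w, half)
    return bottom - top


# Hypothetical 4x4 patch: a dark band (like an eye region) above a bright band.
patch = [[10] * 4, [10] * 4, [200] * 4, [200] * 4]
table = integral_image(patch)
print(two_rect_vertical_feature(table, 0, 0, 4, 4))  # 1520
```

Because every rectangle sum costs only four table lookups regardless of its size, thousands of such features can be evaluated per window, which is a large part of why this family of detectors is fast.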

Modern deep learning-based approaches include:

- Single Shot Multibox Detector (SSD) (2015)

- Multi-Task Cascaded Convolutional Neural Network (MTCNN) (2016)

- Dual Shot Face Detector (DSFD) (2019)

- RetinaFace-Resnet (2019)

- RetinaFace MobilenetV1 (2019)

- MediaPipe (2019)

- YuNet (2021)

Each algorithm carries its unique strengths and weaknesses, making the choice contingent upon the specific demands of the application at hand.

DSFD and RetinaFace-ResNet50 give better detection accuracy, but they are very slow and may not be suitable for real-time inference.

MediaPipe gives the best detection speed but tends to miss faces in uncontrolled conditions.

YuNet and RetinaFace-MobileNetV1 are fast enough for real-time inference while still maintaining decent accuracy.

Preprocessing Techniques: Fine-tuning the efficiency and accuracy of face detection algorithms involves judicious preprocessing steps, encompassing image normalization, resizing, and grayscale conversion.
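The three preprocessing steps named above can be sketched in plain Python as follows. This is a simplified illustration rather than a production pipeline. It assumes an image stored as nested lists of 8-bit RGB tuples, uses the common BT.601 luma weights for grayscale conversion, and uses nearest-neighbor sampling for resizing. All function names are hypothetical, and which steps a given detector actually needs depends on the model.

```python
from typing import List, Tuple

RGBImage = List[List[Tuple[int, int, int]]]
GrayImage = List[List[float]]


def to_grayscale(img: RGBImage) -> GrayImage:
    """Convert RGB pixels to luma using the BT.601 weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row] for row in img]


def resize_nearest(img: GrayImage, new_w: int, new_h: int) -> GrayImage:
    """Resize by picking the nearest source pixel for every target pixel."""
    old_h, old_w = len(img), len(img[0])
    return [
        [img[y * old_h // new_h][x * old_w // new_w] for x in range(new_w)]
        for y in range(new_h)
    ]


def normalize(img: GrayImage) -> GrayImage:
    """Scale 8-bit intensities from [0, 255] to [0.0, 1.0]."""
    return [[p / 255.0 for p in row] for row in img]


def preprocess(img: RGBImage, size: Tuple[int, int]) -> GrayImage:
    """Grayscale, resize to (width, height), then normalize."""
    gray = to_grayscale(img)
    resized = resize_nearest(gray, size[0], size[1])
    return normalize(resized)
```

In practice these operations are usually delegated to an image library, which also offers better interpolation than nearest-neighbor sampling. The sketch only shows what each step does to the pixel values.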

Training the Model: Machine learning, particularly the realm of deep learning, takes center stage as the model undergoes rigorous training on the meticulously curated dataset. The goal is to optimize the model for accurate face detection while minimizing false positives.
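One way to track the "accurate detection with few false positives" goal during training is to score predicted boxes against ground-truth boxes. The sketch below is a simplified, assumed evaluation routine and not a specific benchmark protocol. Boxes are `(x1, y1, x2, y2)` tuples, a prediction counts as correct when its intersection over union (IoU) with an unmatched ground-truth box reaches a threshold, and predictions are matched greedily in the order given (a fuller version would first sort them by confidence).

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds: List[Box], truths: List[Box],
                     iou_threshold: float = 0.5) -> Tuple[float, float]:
    """Greedily match predictions to ground truth and return (precision, recall)."""
    matched = set()
    true_pos = 0
    for p in preds:
        best_j, best_iou = -1, 0.0
        for j, t in enumerate(truths):
            if j in matched:
                continue
            value = iou(p, t)
            if value > best_iou:
                best_j, best_iou = j, value
        if best_j >= 0 and best_iou >= iou_threshold:
            matched.add(best_j)
            true_pos += 1
    false_pos = len(preds) - true_pos   # detections with no matching face
    false_neg = len(truths) - true_pos  # faces the detector missed
    precision = true_pos / (true_pos + false_pos) if preds else 0.0
    recall = true_pos / (true_pos + false_neg) if truths else 0.0
    return precision, recall
```

Precision falls as false positives rise, and recall falls as faces are missed, so watching both on a held-out set gives a clearer picture than a single accuracy number.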

Optimization: Real-time performance often calls for optimization techniques such as quantization, pruning, and model compression. These techniques reduce the model's size and computational demands, ideally with little loss of accuracy.
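As a rough illustration of two of these techniques, the sketch below applies symmetric 8-bit quantization and magnitude-based pruning to a flat list of weights. It is a toy example under stated assumptions. Real frameworks quantize per tensor or per channel and usually fine-tune after pruning, and the function names here are hypothetical rather than any library's API.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the given fraction of weights with the smallest absolute value."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]
```

Each quantized weight differs from the original by at most about half the scale factor, which is the source of the small accuracy cost. Pruning removes the weights that contribute least by magnitude, so the resulting zeros can be skipped or compressed.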

Hardware Considerations for Face Detection Applications

Selecting the appropriate hardware is a critical decision, influenced by several factors including the scale of deployment, required accuracy, real-time processing needs, and budget constraints. Below are some commonly used hardware options for face detection applications:

- CPUs (Central Processing Units):

  - General Purpose CPUs: Suitable for smaller-scale deployments or development purposes, offering flexibility but may lack the necessary performance for real-time processing in larger systems.

- GPUs (Graphics Processing Units):

  - NVIDIA GPUs: Widely employed for deep learning tasks, including face recognition. Their parallel processing capabilities accelerate neural network computations, enhancing performance for both training and inference.

- TPUs (Tensor Processing Units):

  - Google's Tensor Processing Units: Optimized for AI workloads, including deep learning inference tasks. TPUs offer high throughput and efficiency for inference in large-scale applications.

- Edge AI Processors:

  - ASICs (Application-Specific Integrated Circuits): Custom-designed chips optimized for specific tasks. ASICs can provide high efficiency and speed for face recognition tasks, often utilized in edge devices for real-time inference.

  - FPGAs (Field-Programmable Gate Arrays): Configurable hardware that can be reprogrammed for specific tasks. FPGAs offer flexibility and can be tailored for face recognition algorithms.

- Dedicated AI Chips:

  - NVIDIA Jetson Series: Compact embedded AI computing platforms suitable for edge devices. They offer GPU acceleration for deep learning tasks, including face recognition, in devices like cameras or IoT devices.

  - Intel Movidius Neural Compute Stick: Low-power, USB-based AI accelerators suitable for edge computing. They provide hardware acceleration for AI inference tasks.

- Cloud-Based Solutions:

  - Cloud-based GPUs and TPUs: Ideal for applications where real-time processing is not essential. Cloud services offer scalability and computing power without the need for on-premises hardware.

Careful consideration of these hardware options is paramount, ensuring that the selected hardware aligns with the specific requirements and constraints of the face detection application, whether it be for real-time processing, edge computing, or cloud-based solutions.
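When comparing hardware candidates for real-time use, a simple check is whether the measured per-frame latency fits the time budget implied by the target frame rate. The helper below is a minimal sketch of that arithmetic. The `detect` callable passed to `mean_latency_ms` stands in for whatever detector is under test and is an assumption of this example, as are the function names.

```python
import time
from typing import Any, Callable


def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame, in milliseconds, at the target frame rate."""
    return 1000.0 / target_fps


def mean_latency_ms(detect: Callable[[Any], Any], sample: Any,
                    runs: int = 20, warmup: int = 3) -> float:
    """Average wall-clock time of detect(sample) over several runs, after warm-up calls."""
    for _ in range(warmup):
        detect(sample)
    start = time.perf_counter()
    for _ in range(runs):
        detect(sample)
    return (time.perf_counter() - start) * 1000.0 / runs


def meets_realtime(latency_ms: float, target_fps: float) -> bool:
    """True if one frame can be processed within the per-frame budget."""
    return latency_ms <= frame_budget_ms(target_fps)
```

For example, a 25 frames-per-second target leaves 40 ms per frame, so a detector averaging 50 ms on a given device would need a lighter model, further optimization, or faster hardware.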

Challenges and Solutions

- Tackling Variability in Conditions: Addressing the multifaceted challenges posed by lighting, angles, facial expressions, and occlusions demands resilient algorithms and a rich tapestry of training data.

- Achieving Real-Time Performance: Real-time face detection requires balancing inference speed against accuracy, through both algorithmic optimization and model-level techniques such as quantization and pruning.

- Privacy and Ethical Concerns: The development of systems prioritizing privacy by design and adhering to ethical guidelines in facial data usage emerges as a critical consideration.

Future Trends and Applications

- Advancements in Deep Learning: Anticipated strides in deep learning architectures hold the promise of further elevating the accuracy and efficiency of face detection systems.

- Edge Computing: The migration of computation closer to the data source, epitomized by edge computing, is poised to enhance processing speed and reduce latency.

- Ethical AI: An increased emphasis on ethical considerations throughout the development and deployment phases ensures privacy, fairness, and transparency in the realm of face detection systems.

To Summarize

The construction of an efficient face detection system seamlessly integrates cutting-edge technology, meticulous data curation, algorithmic finesse, and ethical deliberation. As technology evolves, the pursuit of accuracy, efficiency, and ethical standards in face detection remains paramount, influencing diverse industries and societal dimensions. Stay tuned for deeper insights into the ever-evolving landscape of AI and its far-reaching applications.

About the Author

Purushothama Reddy Bandla is a software architect/developer and has pursued his master’s in VLSI Design. He has over 15 years of embedded and automotive industry experience with an interest in building new end-to-end AI solutions that accelerate human well-being and developing different autonomous models for human-friendly interactive machines in the automotive and agriculture fields.
